The industrial adoption of generative AI in 3D pipelines has long been throttled by a scaling bottleneck: the “resolution-compute” trap. A dense voxel grid grows cubically with resolution, so compute and memory climb far faster than the detail they buy, and many generative models struggle to maintain geometric integrity as detail density increases, producing “triangle soup” or fragmented meshes. Achieving Gigascale 3D generation requires more than raw compute; it demands a shift in how spatial data is sampled and reconstructed.

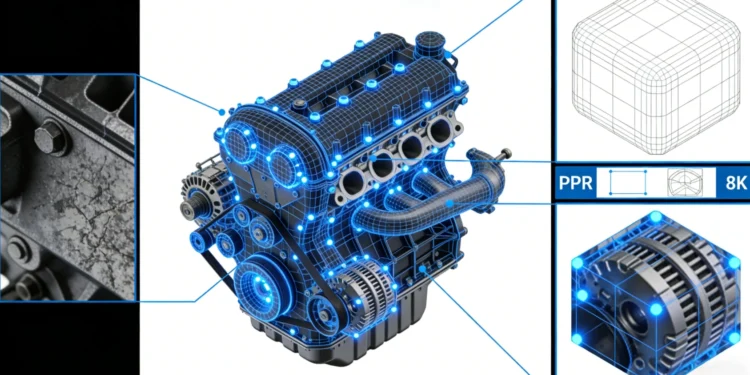

Neural4D addresses this through its self-developed Direct3D-S2 architecture, a NeurIPS 2025 breakthrough designed to handle 2048³ ultra-high-resolution native geometry. By moving away from brute-force estimation and toward native volumetric logic, the system ensures that high-fidelity outputs are not just visually impressive but structurally sound.

Deep Dive: Spatial Sparse Attention (SSA) vs. Brute Force

The core technical differentiator of the Direct3D-S2 engine is the Spatial Sparse Attention (SSA) mechanism. Conventional transformers suffer from quadratic complexity when processing dense 3D volumes: full self-attention compares every token with every other token, so cost grows with the square of the voxel count. SSA reduces this cost by focusing computation on active geometric surfaces and skipping empty voxels.

⚡ 12x Inference Efficiency: SSA is reported to deliver inference roughly 12 times faster than current industry-standard approaches.

🎯 Volumetric Precision: Unlike models that guess depth from flat profiles, the Direct3D-S2 engine processes the full volume to ensure manifold geometry.

⚡ Computational Overhead Reduction: By prioritizing sparsity, the system generates complex anatomical or mechanical structures without the typical lag associated with high-poly counts.
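To make the quadratic-cost argument concrete, here is a minimal sketch that counts how many voxels a surface actually occupies in a dense grid and compares the number of attention pairs in each case. It is not the Direct3D-S2 implementation: the sphere test shape, the one-voxel shell thickness, and the function names `count_surface_voxels` and `attention_pairs` are illustrative assumptions, and real SSA involves more than simply dropping empty voxels.

```python
import math


def attention_pairs(num_tokens: int) -> int:
    """Query-key pairs evaluated by full (dense) self-attention."""
    return num_tokens * num_tokens


def count_surface_voxels(n: int) -> int:
    """Count voxels in an n^3 grid lying within half a voxel of a sphere's surface.

    The sphere (radius 0.4 * n, centred in the grid) is a stand-in for
    "active geometry"; any closed surface would show a similar trend.
    """
    centre = (n - 1) / 2.0
    radius = 0.4 * n
    count = 0
    for x in range(n):
        for y in range(n):
            for z in range(n):
                dist = math.sqrt(
                    (x - centre) ** 2 + (y - centre) ** 2 + (z - centre) ** 2
                )
                if abs(dist - radius) <= 0.5:
                    count += 1
    return count


if __name__ == "__main__":
    for n in (16, 32):
        total = n ** 3
        active = count_surface_voxels(n)
        ratio = attention_pairs(total) / attention_pairs(active)
        print(
            f"{n}^3 grid: {active} of {total} voxels active "
            f"({active / total:.1%}), dense/sparse pair ratio ~{ratio:.0f}x"
        )
```

Because surface voxels grow roughly with the square of the resolution while the full grid grows with its cube, the active fraction shrinks as resolution rises, which is why attending only to occupied regions pays off more at higher resolutions.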

The industry’s move toward spatial intelligence for production-ready 3D AI highlights the need for deterministic algorithms that ensure mesh integrity from the first inference.

Eliminating Topological Artifacts: From Cloud to Engine

For enterprise-level integration, a model’s value is dictated by its “readiness”: the amount of manual cleanup required before an asset can enter a game engine or a simulation environment.

- Watertight Mesh Generation: Direct3D-S2 is designed to output mathematically watertight geometry. This eliminates the need for manual hole-patching, making files immediately compatible with 3D printing slicers or physics engines.

- Quad-Dominant Edge Flow: Rather than producing chaotic triangular meshes, the system generates clean topology with a focus on quad-dominant structures. This ensures the mesh is “engine-ready” and easy for technical artists to manipulate.

- PBR Material Integrity: The system calculates and outputs Normal, Roughness, and Metallic maps alongside the geometry, so assets respond consistently to dynamic lighting in Unity or Unreal Engine.
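As a rough illustration of how the first two properties can be checked on an incoming asset, the sketch below tests a face list for closed, edge-manifold topology (every edge shared by exactly two faces) and measures the share of quad faces. The helper names `is_watertight` and `quad_ratio` and the hand-built cube are assumptions made for this example, not part of any Neural4D tooling, and the edge test is only a necessary condition: it does not detect self-intersections or inconsistent face orientation.

```python
from collections import Counter


def edge_counts(faces):
    """Count how many faces use each undirected edge."""
    counts = Counter()
    for face in faces:
        for i in range(len(face)):
            a, b = face[i], face[(i + 1) % len(face)]
            counts[(min(a, b), max(a, b))] += 1
    return counts


def is_watertight(faces) -> bool:
    """True when every edge is shared by exactly two faces (no holes, no fins)."""
    counts = edge_counts(faces)
    return bool(counts) and all(c == 2 for c in counts.values())


def quad_ratio(faces) -> float:
    """Fraction of faces that are quads; values near 1.0 mean quad-dominant."""
    if not faces:
        return 0.0
    return sum(1 for f in faces if len(f) == 4) / len(faces)


# Unit cube as six quads; vertex index = x + 2*y + 4*z.
CUBE = [
    (0, 1, 3, 2),  # bottom (z = 0)
    (4, 5, 7, 6),  # top    (z = 1)
    (0, 1, 5, 4),  # front  (y = 0)
    (2, 3, 7, 6),  # back   (y = 1)
    (0, 2, 6, 4),  # left   (x = 0)
    (1, 3, 7, 5),  # right  (x = 1)
]

if __name__ == "__main__":
    print("closed cube watertight:", is_watertight(CUBE))
    print("cube missing a face watertight:", is_watertight(CUBE[:-1]))
    print("quad ratio:", quad_ratio(CUBE))
```

A check of this kind is cheap enough to run as a gate in an import pipeline before a mesh is handed to a slicer or physics engine.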

Neural4D-2.5: The Multi-Modal Feedback Loop

To bridge the gap between AI generation and specific project requirements, Neural4D-2.5 introduces a conversational multi-modal layer. This allows developers to use natural language instructions to perform precise refinements on the generated mesh.

Instead of a “blind” regeneration that resets the entire model, this feedback loop keeps the output anchored to the original prompt while allowing iterative fine-tuning of proportions or textures. This capability significantly reduces the hallucination rate and aligns the output with professional production standards.

Conclusion: Architecting the Future of Spatial Computing

The shift from experimental AI to industrial-grade 3D production requires a move toward architectures such as Direct3D-S2. By optimizing the underlying volumetric logic and reducing computational overhead, Neural4D provides a scalable solution for game studios, e-commerce platforms, and 3D printing communities.

For organizations looking to automate their asset pipelines, the Sparse Volumetric API offers a robust path toward integrating Gigascale 3D generation directly into existing enterprise workflows.